The Syntiant® Neural Decision Processor™ (NDP) architecture is built from the ground up to run deep learning algorithms for speech recognition. It offers several flexible ways to access the neural processing engine, and the user has full customisation of applications and post-processing. By tightly coupling computation and memory, these devices achieve an approximately 100x efficiency improvement over stored-program architectures such as CPUs and DSPs.

The NDP101’s programmable deep learning network supports dozens of application-defined audio sequences for a variety of use cases, including keyword speech interface, wake-word detection, speaker identification, sensor applications, and audio event and environment classification. Programmable GPIO, SPI Master, and the ability to boot from a serial flash enable the NDP101 to be the only processor in the system, saving space and BOM cost.

The system-on-chip comes in a 32-pin QFN package in which eight of the GPIO pins have programmable direction and drive strength. There are dual inputs for PDM microphones or PCM-over-SPI, and the stereo/mono I2S interface is multiplexed with PDM. The embedded processor is an Arm Cortex-M0 with 112 kB of SRAM, and an integrated clock multiplier and dividers support a low-frequency clock source or external clocking. With active power consumption of <140 µW while recognising words, the device is aimed squarely at demanding low-power applications.
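To put the <140 µW figure in context, here is a rough back-of-the-envelope sketch of how long a small battery could sustain that load. The coin-cell values (225 mAh nominal capacity at 3 V) are illustrative assumptions and are not taken from Syntiant's documentation. The function name `battery_life_days` is hypothetical, and the estimate ignores self-discharge, regulator losses, and the power drawn by microphones and the rest of the system.

```python
def battery_life_days(capacity_mah: float, voltage_v: float, load_uw: float) -> float:
    """Ideal runtime in days for a constant load.

    Ignores self-discharge, regulator losses, and every load other
    than the one passed in, so treat the result as an upper bound.
    """
    energy_mwh = capacity_mah * voltage_v   # mAh * V = mWh of stored energy
    load_mw = load_uw / 1000.0              # convert microwatts to milliwatts
    hours = energy_mwh / load_mw
    return hours / 24.0


# Assumed coin cell: 225 mAh nominal at 3 V (illustrative, not from the source).
# 140 µW is the upper bound on active power quoted for the NDP101.
days = battery_life_days(capacity_mah=225.0, voltage_v=3.0, load_uw=140.0)
print(f"Ideal runtime at 140 µW: {days:.0f} days")
```

Under these assumptions the chip alone would run for roughly 200 days of continuous listening. A real design would see less once the microphone and supporting components are included.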

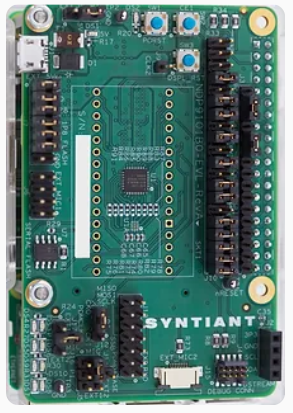

To evaluate this technology, Syntiant created the NDP9101B0 development platform, which is implemented as a Raspberry Pi shield with easily configurable jumpers for connecting a number of different microphones and sensors. As well as the NDP101B0 chip, the board includes serial flash (500 kB), dual Infineon IM69D120 microphones, a programmable Si5351A clock IC, and four LEDs for I/O display. The kit includes a Raspberry Pi 3B+, a power supply, a pre-configured micro-SD card, and an Alexa wake-word model for an easy start to evaluating the technology.

Syntiant also provides extensive software development tools to help evaluate the Neural Decision Processors, including a Training Development Kit (TDK) and an SDK that contains the tools and software required to control and operate Syntiant NDP devices.

This certainly seems like an interesting chip that could be integrated into a great many devices. The thing I always wonder about with speech-recognition devices is where the limits of the language processing lie. How does it fare with tonal or particularly plosive languages? I suppose I mainly wonder whether it can tell the difference between the Thai words for ‘dog’ and ‘horse’, or the Japanese words for ‘like’ and ‘the Moon’. Maybe someone here can let me know.

Keep designing!

(Image sourced from Syntiant)